A journey through end-to-end testing

Software Engineer (Mobile Specialist) at The Athletic and an aspiring writer at Hashnode, with 8+ years of experience. Focused on mobile technologies like Android, iOS, and hybrid solutions such as Flutter.

There's no consensus on test naming: we often use different terms for the same test strategy. That's why it's valuable to discuss it as a team and build a ubiquitous language for your test strategy.

What does end-to-end (E2E) mean? I know what it doesn't mean: regression tests. The two are confused all the time, and that's understandable. E2E tests are part of the regression test suite and share its goal of checking whether a change introduced a bug. Keep in mind, though, that regression testing involves running the entire test suite, which includes the end-to-end tests.

End-to-end tests are also referred to as Broad Stack Tests. They exercise some part of the system entirely, fully integrated, which makes them expensive to automate. E2E tests can be structured in many ways, but let's focus on two of them: Journey Tests and Smoke Tests.

Journey Test

Journey tests are also a kind of Business Facing Test. They simulate real-world scenarios by executing user journeys inside the app. The idea is to cover the whole application, checking how different components interact and whether the system behaves correctly in complex scenarios.

Automated journey tests can also be implemented using the Component Test strategy, which offers advantages over traditional end-to-end (E2E) tests. Component tests are generally faster to execute and easier to maintain because they use test doubles to isolate dependencies, particularly those that require network calls, which reduces test flakiness. However, even with this approach, component tests can be expensive to create, so be selective about which journeys you cover with them.
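To make the test double idea concrete, here's a minimal Python sketch of a component-style test. It assumes a hypothetical `ArticleRepository` dependency that would normally hit the network. `FakeArticleRepository` replaces it in the test, so nothing can time out or flake. All three class names are illustrative only. On Android or iOS, the same idea would go through your platform's test framework and dependency injection setup.

```python
from typing import List, Protocol


class ArticleRepository(Protocol):
    """Hypothetical dependency that, in production, would fetch articles over the network."""

    def fetch_titles(self) -> List[str]:
        ...


class FakeArticleRepository:
    """Test double: returns canned data instantly, with no network call to time out or flake."""

    def __init__(self, titles: List[str]) -> None:
        self._titles = titles

    def fetch_titles(self) -> List[str]:
        return list(self._titles)


class ArticleListViewModel:
    """Hypothetical component under test; it depends only on the repository interface."""

    def __init__(self, repository: ArticleRepository) -> None:
        self._repository = repository
        self.visible_titles: List[str] = []

    def load(self) -> None:
        # The component's own logic (here, alphabetical sorting) runs against the fake data.
        self.visible_titles = sorted(self._repository.fetch_titles())


def test_article_list_shows_sorted_titles() -> None:
    # Inject the fake instead of a network-backed repository.
    view_model = ArticleListViewModel(FakeArticleRepository(["Transfer news", "Match report"]))
    view_model.load()
    assert view_model.visible_titles == ["Match report", "Transfer news"]


# Runs directly as a script; pytest would also discover the test_ function on its own.
test_article_list_shows_sorted_titles()
```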

Smoke Test

First of all, we are not planning to stress any device until it starts smoking... The term Smoke Test comes from turning on a piece of electronic equipment for the first time to see whether it starts smoking, which would indicate a major flaw in the hardware.

In software, a smoke test is a kind of end-to-end test that executes a few user journeys in the application to check whether the basic functionality works. Smoke tests can therefore be seen as a small version of User Journey Tests. Which journeys are selected depends on how critical each feature is to the system.

Keep in mind that smoke tests should be created using the E2E strategy, even if that is more expensive, since the idea is to test only the happy path of a few user journeys.
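If your journeys are automated, one way to keep the smoke suite to "a few critical journeys, happy path only" is to tag each journey and derive the smoke suite from the full journey suite. Here's a minimal Python sketch of that idea. The `Journey` record, its 1-to-3 `criticality` scale, the `select_smoke_suite` helper, and the journey names are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Journey:
    name: str
    criticality: int  # hypothetical scale: 1 = low, 3 = business critical
    happy_path: bool  # True when the journey exercises the expected, error-free flow


def select_smoke_suite(journeys: List[Journey], min_criticality: int = 3) -> List[Journey]:
    """Keep only happy-path journeys whose feature is critical enough for a smoke run."""
    return [j for j in journeys if j.happy_path and j.criticality >= min_criticality]


journey_suite = [
    Journey("Launch app and see Login screen", criticality=3, happy_path=True),
    Journey("Log in with valid credentials", criticality=3, happy_path=True),
    Journey("Log in with wrong password", criticality=3, happy_path=False),
    Journey("Change notification settings", criticality=1, happy_path=True),
]

smoke_suite = select_smoke_suite(journey_suite)
print([j.name for j in smoke_suite])
```

The full journey suite stays the single source of truth, and the smoke suite is just a filtered view of it. A new critical journey therefore joins the smoke run without a second list to maintain.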

How to write

Automated or not, test scenarios also serve as documentation for the system. We should therefore care about well-defined scenarios and readability, making them understandable not only to engineers but also to stakeholders.

Readability depends on the audience. However, we can tailor the message to be niche or broadly understandable by adjusting its structure to the target readers.

We can achieve our readability goal by using the Gherkin syntax to structure our test scenarios. The keywords Given, When, And, and Then represent each step of the user journey in the app. While Gherkin is commonly used with Behavior-Driven Development (BDD), it's not a strict requirement. Both Gherkin syntax and BDD are valuable practices, but their applicability depends on the team's context.

Here's how we can structure a test scenario using Gherkin:

Given my application is installed

And I tap on the app icon

When the app is launched

Then I see the Login screen

The scenario above can be executed manually, through automation, or both. Either way, it's important to document these scenarios somewhere: a spreadsheet, a Confluence page, or any document the whole team can access. That way, anyone can reproduce the test steps or look up a business rule of the system.
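When a scenario is automated, each Gherkin line is bound to a piece of code, usually called a step definition, and tools like Cucumber handle that binding for you. Here's a minimal Python sketch of the binding that doesn't rely on any particular tool's API. A hypothetical `STEPS` registry maps each step's text to a function. `run_scenario` strips the keyword and executes the lines in order. The `app` dictionary is an assumption standing in for a real device or emulator.

```python
from typing import Callable, Dict, List

# Hypothetical in-memory stand-in for the app under test.
app: Dict[str, object] = {"installed": False, "running": False, "screen": None}

STEPS: Dict[str, Callable[[], None]] = {}


def step(text: str) -> Callable[[Callable[[], None]], Callable[[], None]]:
    """Register a function as the implementation of one Gherkin step."""
    def register(func: Callable[[], None]) -> Callable[[], None]:
        STEPS[text] = func
        return func
    return register


@step("my application is installed")
def app_is_installed() -> None:
    app["installed"] = True


@step("I tap on the app icon")
def tap_app_icon() -> None:
    assert app["installed"], "cannot tap the icon of an app that is not installed"
    app["running"] = True
    app["screen"] = "Login"


@step("the app is launched")
def app_is_launched() -> None:
    assert app["running"]


@step("I see the Login screen")
def see_login_screen() -> None:
    assert app["screen"] == "Login"


def run_scenario(lines: List[str]) -> None:
    keywords = ("Given ", "When ", "Then ", "And ")
    for line in lines:
        # The keyword is for human readers; the remaining text selects the step function.
        text = next(line[len(k):] for k in keywords if line.startswith(k))
        STEPS[text]()


run_scenario([
    "Given my application is installed",
    "And I tap on the app icon",
    "When the app is launched",
    "Then I see the Login screen",
])
```

The scenario text stays readable for stakeholders, while the engineering detail lives in the step functions.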

What about automating these kinds of test scenarios? The range of possibilities is huge and depends on the platform. However, we can establish some automation rules that apply no matter the platform or framework.

Automation Rules

Looking at our example scenario above, we can extract some rules from it and infer others. Some of the following rules may seem obvious, but what's obvious to you may not be to someone else, so let's write them down.

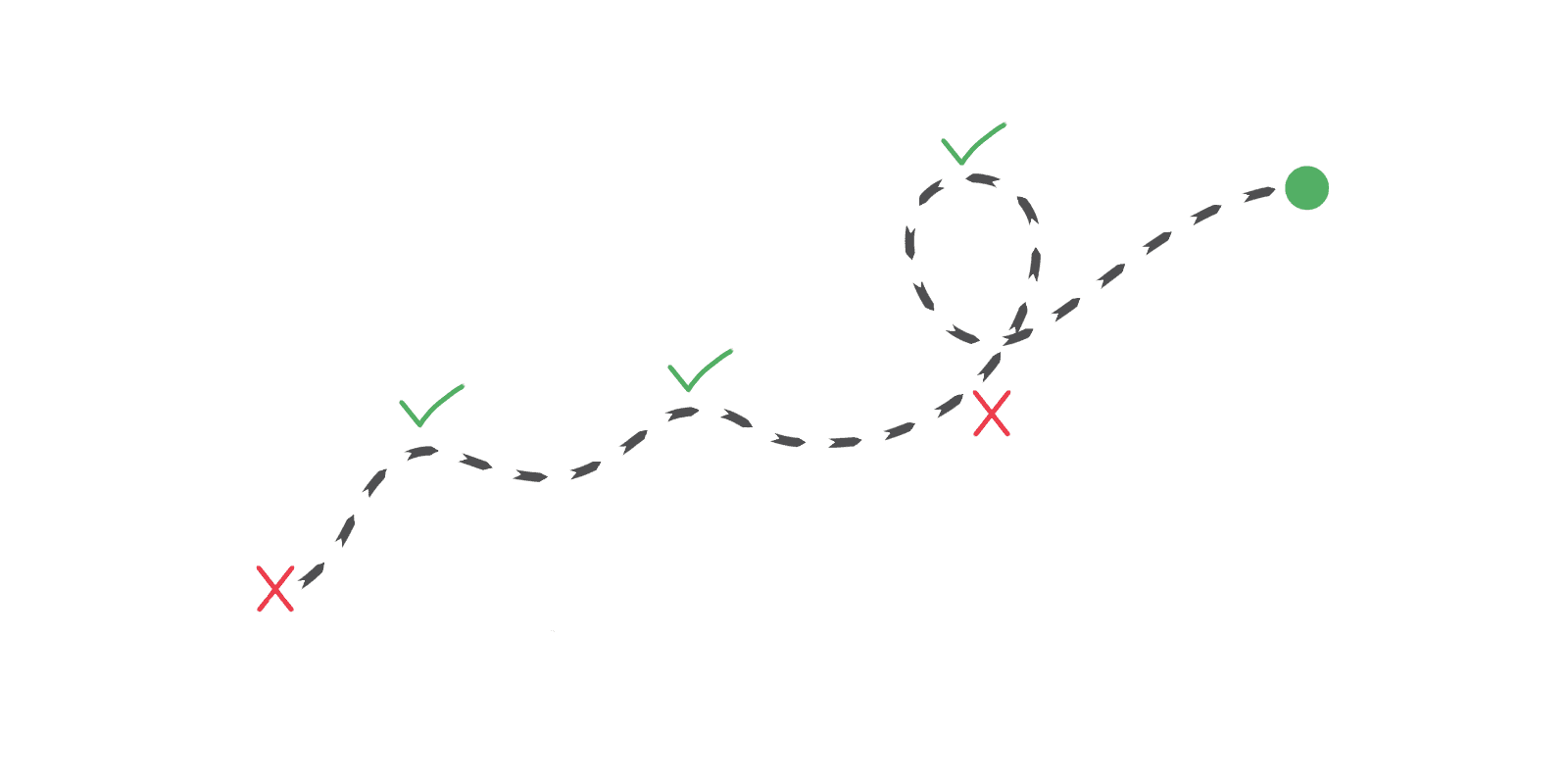

Ordered steps: Test scenarios are composed of ordered steps. A user journey changes according to the steps the user takes in the application, so a different sequence of steps means a different test scenario.
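To see why order matters, we can model each step as a function that takes the current screen and returns the next one, then run the steps strictly in sequence. In this Python sketch, the navigation rules and screen names are hypothetical. The same two steps in a different order end on a different screen, so they form two scenarios with different expected results.

```python
from functools import reduce
from typing import Callable, List

Step = Callable[[str], str]  # takes the current screen name, returns the next one


def open_settings(_screen: str) -> str:
    # Hypothetical navigation: Settings is reachable from any screen.
    return "Settings"


def press_back(screen: str) -> str:
    # Hypothetical navigation: Back from Settings returns Home; elsewhere it stays put.
    return "Home" if screen == "Settings" else screen


def run_steps(start: str, steps: List[Step]) -> str:
    """Apply the steps strictly in the given order and return the final screen."""
    return reduce(lambda screen, step: step(screen), steps, start)


print(run_steps("Home", [open_settings, press_back]))  # ends on Home
print(run_steps("Home", [press_back, open_settings]))  # ends on Settings
```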

One step, one assertion: Asserting each step of the user journey helps pinpoint the exact step that caused a test failure, improves test logging, and increases step reusability, since this practice follows the single responsibility principle.
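Here's a minimal Python sketch of the rule. Each step function performs a single action and asserts its own outcome, with the step's Gherkin text as the assertion message. `FakeApp` and the step function names are hypothetical stand-ins for your platform's real driver, such as Espresso, XCUITest, or Flutter's `integration_test` package.

```python
from typing import Optional


class FakeApp:
    """Hypothetical app driver; a real suite would drive a device or emulator."""

    def __init__(self) -> None:
        self.installed = False
        self.launching = False
        self.screen: Optional[str] = None

    def install(self) -> None:
        self.installed = True

    def tap_icon(self) -> None:
        # Tapping only starts a launch if the app is actually installed.
        self.launching = self.installed

    def wait_for_launch(self) -> None:
        if self.launching:
            self.screen = "Login"


# One step = one action + one assertion; the assertion message names the step,
# so a failing run points straight at the step that broke.
def given_my_application_is_installed(app: FakeApp) -> None:
    app.install()
    assert app.installed, "Given my application is installed"


def and_i_tap_on_the_app_icon(app: FakeApp) -> None:
    app.tap_icon()
    assert app.launching, "And I tap on the app icon"


def when_the_app_is_launched(app: FakeApp) -> None:
    app.wait_for_launch()
    assert app.screen is not None, "When the app is launched"


def then_i_see_the_login_screen(app: FakeApp) -> None:
    assert app.screen == "Login", "Then I see the Login screen"


def test_launch_shows_login_screen() -> None:
    app = FakeApp()
    given_my_application_is_installed(app)
    and_i_tap_on_the_app_icon(app)
    when_the_app_is_launched(app)
    then_i_see_the_login_screen(app)


test_launch_shows_login_screen()
```

If the app was never installed, `and_i_tap_on_the_app_icon` fails with the message "And I tap on the app icon". The report then points at that exact step instead of at a single check at the end of the test, and each step function can be reused in other journeys.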

Logging matters: Each step assertion should print an execution result describing the test step, for example:

And I tap on the app icon - SUCCESS

Ordered scenarios: As we're simulating the real world, it should not be possible, for example, to open the account screen before authenticating a user (if the application requires authentication, of course).

References

- User Journey Test Guide - Dominik Szahidewicz

- User Journey Tests - Martin Fowler

- Business Facing Test - Martin Fowler